Real-time Hand Tracking under Occlusion from an Egocentric RGB-D Sensor

Abstract

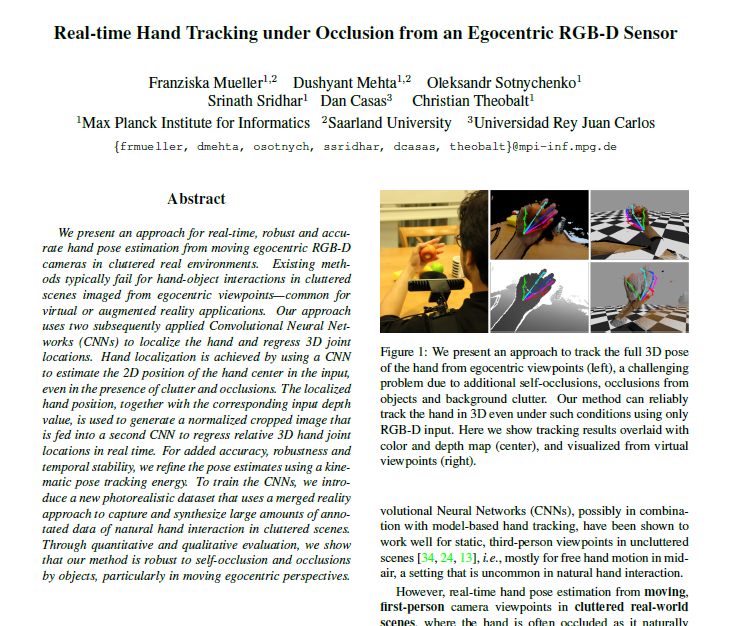

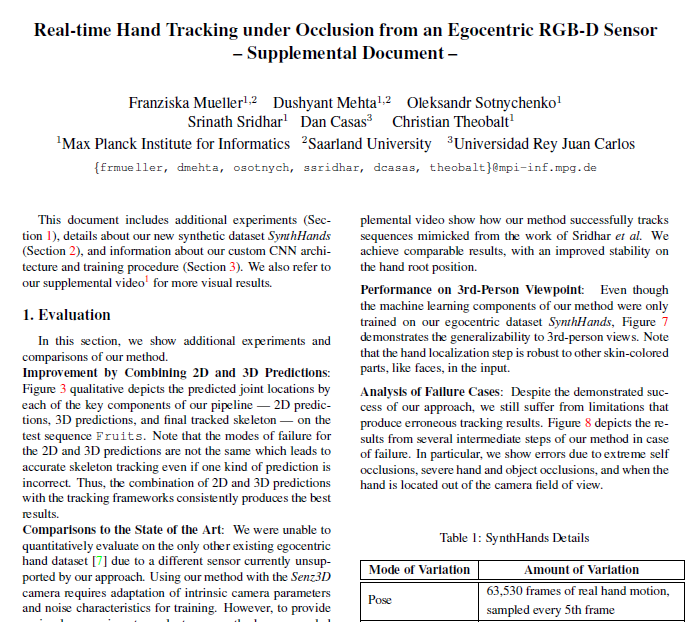

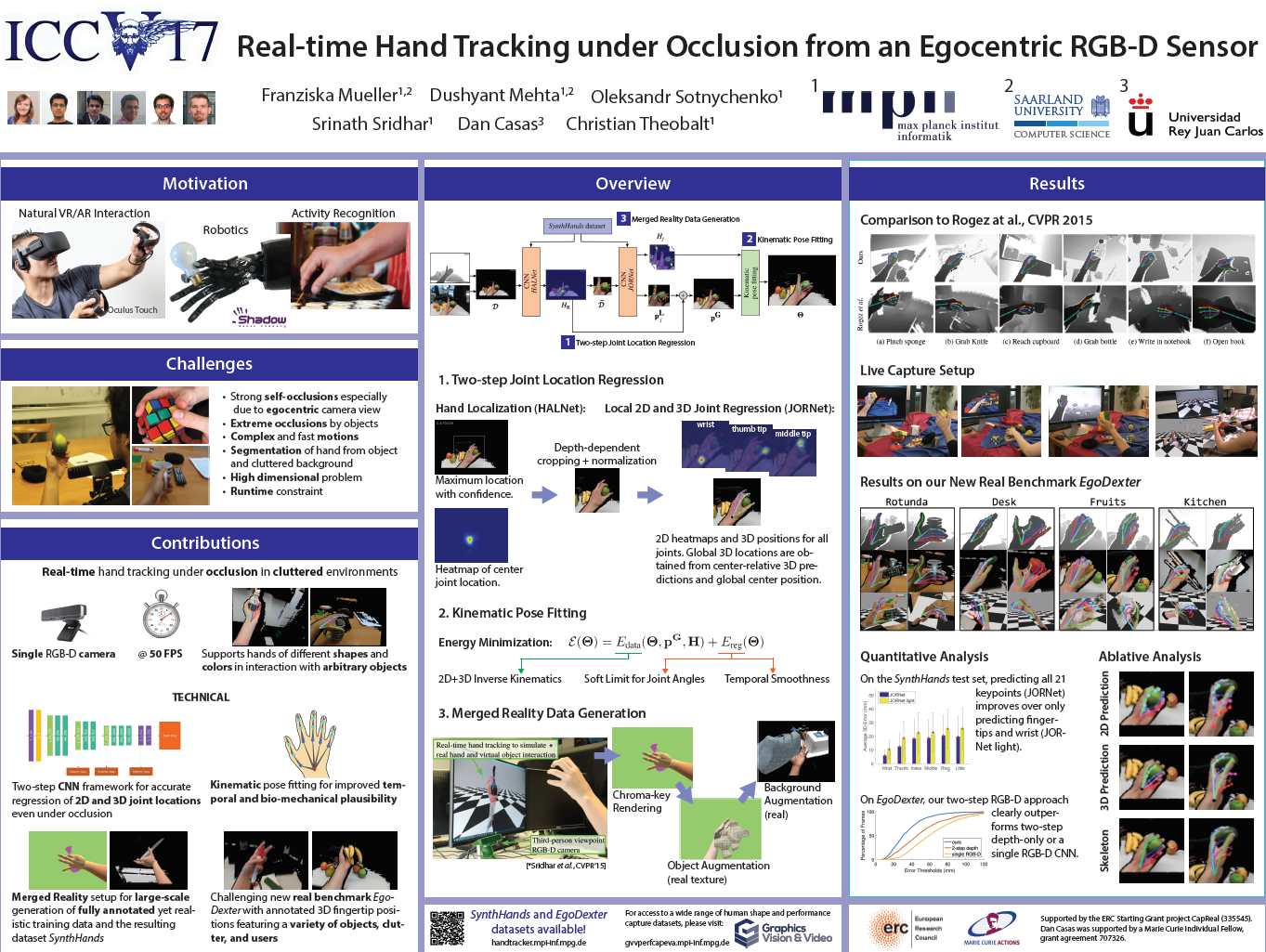

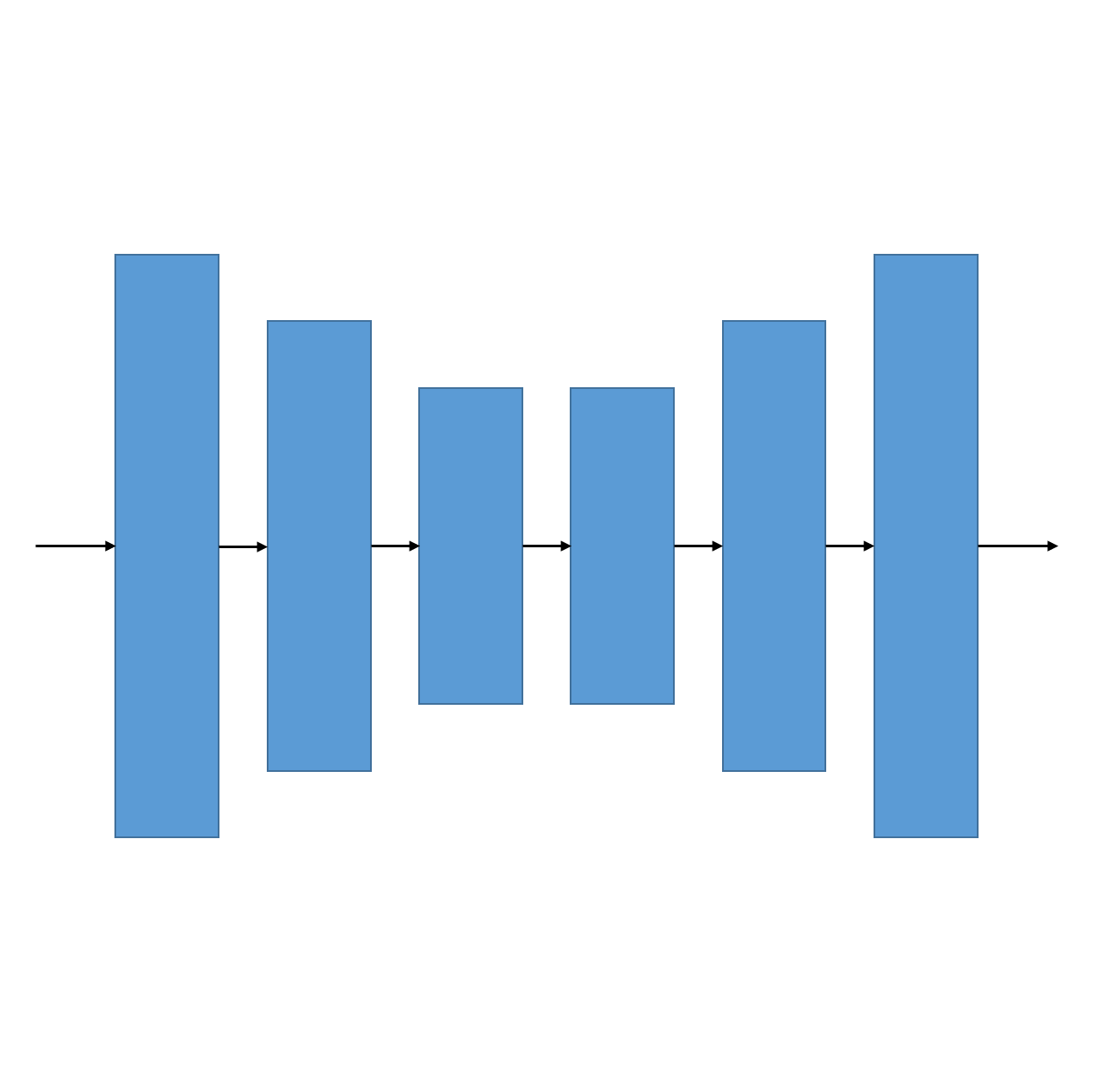

We present an approach for real-time, robust and accurate hand pose estimation from moving egocentric RGB-D cameras in cluttered real environments. Existing methods typically fail for hand-object interactions in cluttered scenes imaged from egocentric viewpoints, common for virtual or augmented reality applications. Our approach uses two subsequently applied Convolutional Neural Networks (CNNs) to localize the hand and regress 3D joint locations. Hand localization is achieved by using a CNN to estimate the 2D position of the hand center in the input, even in the presence of clutter and occlusions. The localized hand position, together with the corresponding input depth value, is used to generate a normalized cropped image that is fed into a second CNN to regress relative 3D hand joint locations in real time. For added accuracy, robustness and temporal stability, we refine the pose estimates using a kinematic pose tracking energy. To train the CNNs, we introduce a new photorealistic dataset that uses a merged reality approach to capture and synthesize large amounts of annotated data of natural hand interaction in cluttered scenes. Through quantitative and qualitative evaluation, we show that our method is robust to self-occlusion and occlusions by objects, particularly in moving egocentric perspectives.
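The step between the two networks, turning a localized hand center and its depth value into a normalized crop, can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `normalized_hand_crop`, the focal length, the assumed physical hand extent, and the output resolution are all hypothetical values chosen for illustration. The sketch assumes a pinhole camera, so a fixed physical extent covers fewer pixels the farther the hand is from the sensor.

```python
import numpy as np

def normalized_hand_crop(depth, center_uv, focal_px=475.0,
                         hand_extent_mm=230.0, out_size=128):
    """Crop a depth-scaled square around the hand center and resample it.

    All default values are illustrative assumptions, not values from the paper.
    depth: (H, W) array in millimeters, 0 marks invalid pixels.
    center_uv: (u, v) pixel position of the hand center (column, row).
    """
    u, v = center_uv
    z = float(depth[v, u])
    if z <= 0:
        raise ValueError("no valid depth at the hand center")

    # Pinhole projection: half-width in pixels of a hand_extent_mm box at depth z.
    half = max(1, int(round(0.5 * focal_px * hand_extent_mm / z)))

    # Zero-pad so that crops near the image border stay in bounds.
    padded = np.pad(depth.astype(np.float32), half, mode="constant")
    crop = padded[v:v + 2 * half, u:u + 2 * half]

    # Nearest-neighbor resampling to a fixed network input size.
    idx = np.arange(out_size) * crop.shape[0] // out_size
    crop = crop[np.ix_(idx, idx)]

    # Express depth relative to the hand center; keep invalid pixels at 0.
    valid = crop > 0
    return np.where(valid, (crop - z) / hand_extent_mm, 0.0).astype(np.float32)

# Usage: a synthetic flat depth map with the hand center in the middle.
depth_map = np.full((240, 320), 500.0, dtype=np.float32)
crop = normalized_hand_crop(depth_map, center_uv=(160, 120))
```

Scaling the crop by depth keeps the apparent hand size roughly constant in the crop, which is one plausible reason to normalize before regressing relative joint locations.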

Downloads

To obtain access to the CNN model, please send an e-mail from your institutional mail address stating your full name and affiliation.

Citation

@inproceedings{OccludedHands_ICCV2017,

author = {Mueller, Franziska and Mehta, Dushyant and Sotnychenko, Oleksandr and Sridhar, Srinath and Casas, Dan and Theobalt, Christian},

title = {Real-time Hand Tracking under Occlusion from an Egocentric RGB-D Sensor},

booktitle = {Proceedings of International Conference on Computer Vision ({ICCV})},

url = {https://handtracker.mpi-inf.mpg.de/projects/OccludedHands/},

numpages = {10},

month = October,

year = {2017}

}

Acknowledgments

This research was funded by the ERC Starting Grant project CapReal (335545).

Dan Casas was supported by a Marie Curie Individual Fellowship, grant agreement 707326.