HandSonor: A Customizable Vision-based Control Interface for Musical Expression

Student Research Competition

Abstract

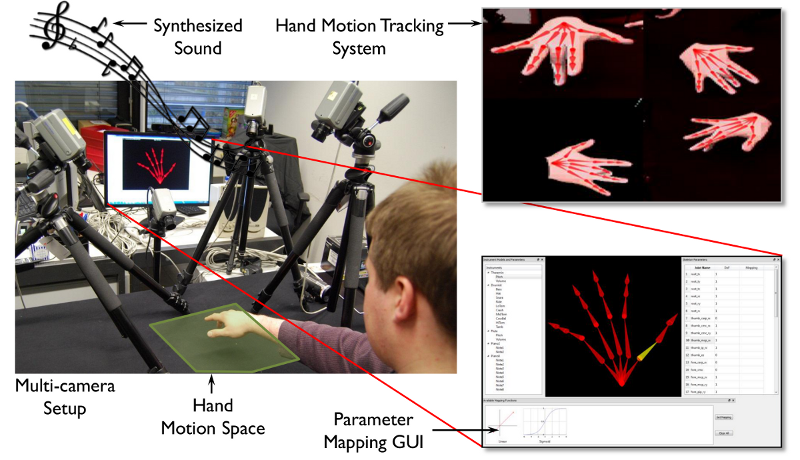

The availability of electronic audio synthesizers has led to the development of many novel control interfaces for music synthesis. The importance of the human hand as a communication channel makes it a natural candidate for such a control interface. In this paper I present HandSonor, a novel, non-contact, and fully customizable control interface that uses the motion of the hand for music synthesis. HandSonor uses images from multiple cameras to track the articulated 3D motion of the hand in real time, without markers or gloves. I frame the problem of transforming hand motion into music as a parameter mapping problem for a range of instruments. I have built a graphical user interface (GUI) that lets users dynamically select instruments and map their parameters to the motion of the hand. I present results of hand motion tracking, parameter mapping, and real-time audio synthesis, which show that users can play meaningful music using HandSonor.

HandSonor in Action

Playing a 7-Key Piano using HandSonor

A user playing a 7-key discrete mapping of a piano using nothing more than a sheet of paper. The song played is the English lullaby "Twinkle Twinkle Little Star".

Download: [ Low-res (25 MB) | Hi-res (270 MB) ]
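To make the idea of a discrete mapping concrete, here is a minimal sketch of how a tracked fingertip position could be turned into one of seven notes. It is not HandSonor's implementation: the function names, the sheet dimensions, the press threshold, and the choice of a C major scale are all assumptions made for illustration.

```python
# Hypothetical sketch of a 7-key discrete mapping. None of these names,
# dimensions, or note choices come from HandSonor itself.

# Assumed key layout: the seven white keys from C4 to B4 as MIDI note numbers.
C_MAJOR_MIDI = [60, 62, 64, 65, 67, 69, 71]


def key_under_fingertip(x, sheet_left=0.0, sheet_width=0.21):
    """Return the key index (0-6) under a fingertip at horizontal position x.

    Positions are in metres. The sheet is split into seven equal-width keys.
    Returns None when the fingertip is outside the sheet.
    """
    u = (x - sheet_left) / sheet_width  # normalised position across the sheet
    if not 0.0 <= u < 1.0:
        return None
    return int(u * len(C_MAJOR_MIDI))


def note_on(x, height, press_height=0.01):
    """Return the MIDI note to trigger, or None if no key is pressed.

    A key counts as pressed when the fingertip is at or below press_height
    (metres above the sheet) and lies over the sheet.
    """
    if height > press_height:
        return None
    key = key_under_fingertip(x)
    return None if key is None else C_MAJOR_MIDI[key]
```

For example, under these assumptions `note_on(0.0, 0.0)` selects the leftmost key, while a fingertip hovering 5 cm above the sheet triggers nothing.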


Playing the Theremin using HandSonor

A user playing a continuous-sound theremin mapping. The song played is the Czech folk song "Skákal pes přes oves".

Download: [ Low-res (33 MB) | Hi-res (345 MB) ]


Hand Motion Tracking System in Action

Download: [ Low-res (27 MB) | Hi-res (114 MB) ]


Mapping Continuous Instrument Parameters

Using the parameter mapping GUI of HandSonor to create continuous mapping schemes. Two examples are shown.

Download: [ Low-res (34 MB) | Hi-res (113 MB) ]
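A continuous mapping scheme of this kind can be sketched as a function from hand-pose values to synthesizer parameters. The example below is only an illustration under stated assumptions, not the mapping HandSonor uses: the choice of hand position and height as inputs, the working ranges in metres, the 220 Hz base pitch, and the two-octave span are all hypothetical.

```python
# Hypothetical sketch of a continuous, theremin-style mapping. The inputs,
# ranges, and constants are assumptions, not values taken from HandSonor.


def lerp_clamped(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamping."""
    t = (value - in_lo) / (in_hi - in_lo)
    t = min(1.0, max(0.0, t))  # keep the output inside the target range
    return out_lo + t * (out_hi - out_lo)


def theremin_params(hand_x, hand_height):
    """Map a hand position (metres) to (frequency in Hz, volume in 0..1).

    Horizontal position controls pitch over two octaves above 220 Hz.
    The mapping is linear in semitones, so equal hand movements produce
    equal musical intervals. Hand height controls volume linearly.
    """
    semitones = lerp_clamped(hand_x, 0.0, 0.5, 0.0, 24.0)
    frequency = 220.0 * 2.0 ** (semitones / 12.0)
    volume = lerp_clamped(hand_height, 0.05, 0.35, 0.0, 1.0)
    return frequency, volume
```

Unlike a discrete key layout, nothing here is quantized: every small change in hand position changes the output, which is what gives the theremin its gliding pitch. Clamping keeps the parameters valid when the hand leaves the assumed working range.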

Related Pages

- Interactive Markerless Articulated Hand Motion Tracking Using RGB and Depth Data, ICCV 2013 (webpage)